I have posted a few times here about a rich dataset that I have.

The daily misspelling rates came from the Amazon.com reviews in Stanford’s SNAP data repository; the astronomical data came from Wolfram’s Mathematica software and its astronomy resources.

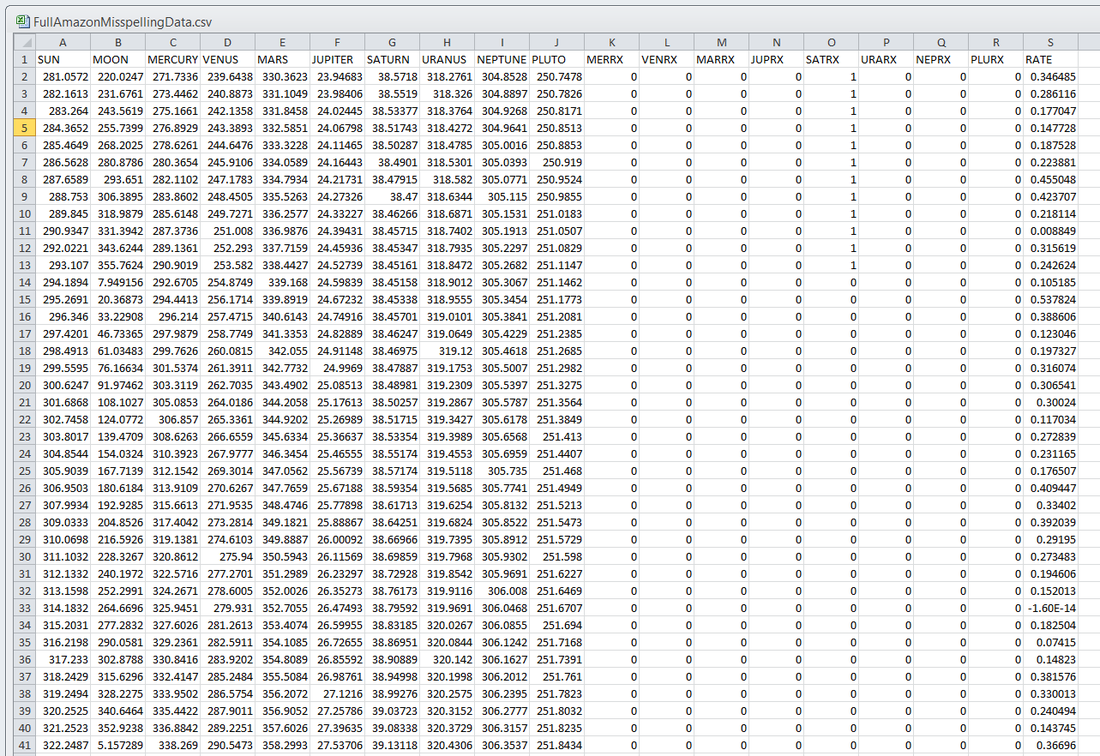

Here is what the dataset looks like:

Each of the 5,296 rows represents one consecutive day in a 14.5-year span of Amazon review misspelling rates, from Jan 1, 2000 to Jul 1, 2014.

Across the top are the labels. In each column is a simple, stable, linear function of the right ascension (i.e., the astrological Tropical degree) of the planet, moon, or star at midnight at the start of that day in London, UK. Retrogressions of the planets are also included.

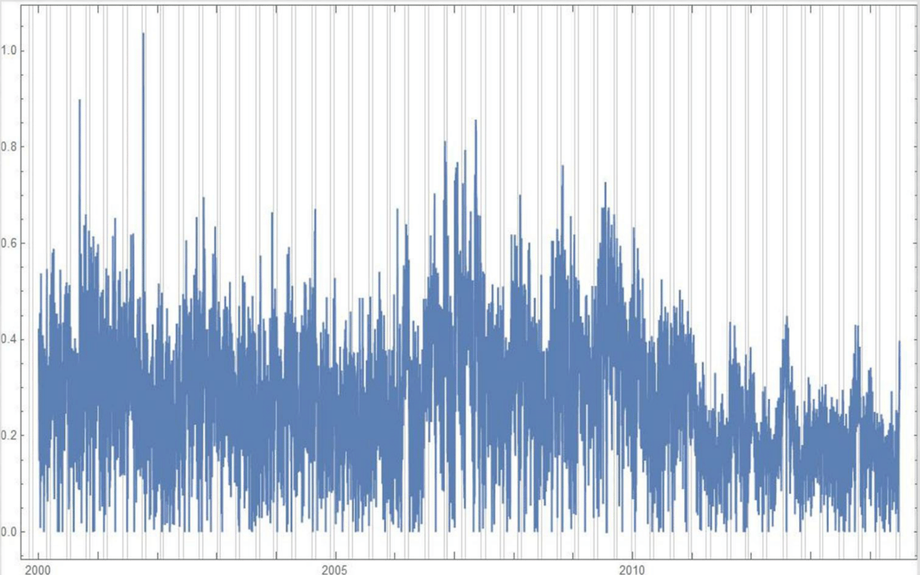

The final column is the log of the difference between each day’s misspelling rate and the 27-day SNIP baseline. (The Moon’s right ascension completes its cycle in a little over 27 days, the shortest cycle of any of the right ascensions.) It is thus the data over time minus its background noise. The following is a graph of this column’s data over time:

SNIP stands for Statistics-sensitive Nonlinear Iterative Peak-clipping. The method preserves cyclic patterns, such as the planetary placements and retrogressions, while discarding “background noise” in the data that would tend to obscure those patterns. SNIP is not subjective; it comes from signal processing of spectra and is unprejudiced.
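For readers who have not met SNIP before, here is a minimal sketch of the peak-clipping idea in plain Python. It is a simplified illustration, not the exact procedure used for this dataset: the function name `snip_baseline` and the parameter `max_half_width` are my own, the toy numbers are made up, published versions often apply a log-log-square-root transform first (omitted here), and how the 27-day window maps onto the half-width is an assumption left to the reader.

```python
def snip_baseline(values, max_half_width):
    """Estimate a slowly varying baseline by iterative peak clipping.

    Simplified SNIP-style sketch (hypothetical helper, not the post's code):
    for each half-width p, every point is replaced by the smaller of itself
    and the mean of its two neighbours p steps away.
    """
    baseline = list(values)
    n = len(baseline)
    for p in range(1, max_half_width + 1):
        previous = baseline[:]  # compare against the previous pass only
        for i in range(p, n - p):
            neighbour_mean = (previous[i - p] + previous[i + p]) / 2.0
            baseline[i] = min(previous[i], neighbour_mean)
    return baseline


# Toy series (made-up numbers): a flat background with one narrow spike.
toy_rates = [1.0] * 10 + [5.0] + [1.0] * 10
toy_baseline = snip_baseline(toy_rates, max_half_width=3)

# What remains after subtracting the baseline is the "signal" part.
toy_residual = [v - b for v, b in zip(toy_rates, toy_baseline)]
```

The baseline can never rise above the data, so narrow peaks are clipped away while the broad background survives.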

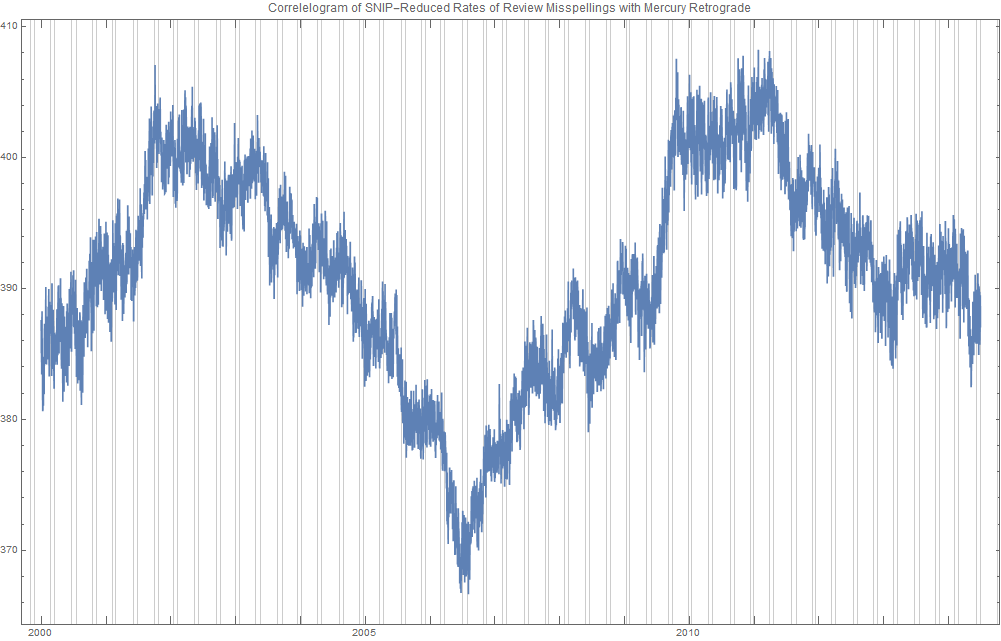

The apparent cyclicity hidden within this data is revealed via a correlogram:

The thin bands represent the start and end of Mercury retrograde across 14.5 years — Mercury retrograde analysis being the original motivator for acquiring this data.
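A correlogram is just the autocorrelation of a series plotted against the lag. The sketch below shows one common way to compute it in plain Python; the function name `autocorrelation` and the toy series are illustrative assumptions, not the code or data behind the figure.

```python
def autocorrelation(series, max_lag):
    """Return sample autocorrelations for lags 0..max_lag.

    Hypothetical helper: uses the usual estimator that divides each lagged
    covariance sum by the lag-0 sum of squares.
    """
    n = len(series)
    mean = sum(series) / n
    centered = [x - mean for x in series]
    total = sum(c * c for c in centered)
    acf = []
    for lag in range(max_lag + 1):
        cov = sum(centered[t] * centered[t + lag] for t in range(n - lag))
        acf.append(cov / total)
    return acf


# Toy series (made up) that repeats every 4 steps.
toy_series = [0.0, 1.0, 0.0, -1.0] * 10
toy_acf = autocorrelation(toy_series, max_lag=8)
```

A cycle in the data shows up as peaks in the autocorrelation at lags equal to the cycle length and its multiples, which is how a correlogram can expose periodicity in the daily values.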

For today’s study, the first 80% of days were used as the training set, and the subsequent 20% of days were held out as a test set for prediction.
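A split like this keeps the days in order rather than shuffling them, so the model is only ever tested on days that come after everything it was trained on. Here is a minimal sketch in Python; the function name `chronological_split` is my own, and the rounding at the cut point is an assumption, since the tool that did the real split may round differently.

```python
def chronological_split(rows, train_fraction=0.8):
    """Split time-ordered rows into an earlier training part and a later test part."""
    cut = int(len(rows) * train_fraction)  # assumption: round the cut point down
    return rows[:cut], rows[cut:]


# Stand-in for the dataset: one integer per day, 5296 days as in the post.
day_indices = list(range(5296))
train_days, test_days = chronological_split(day_indices)
```

Under that rounding assumption, 5,296 rows give 4,236 training days and 1,060 test days, and every test day falls after the last training day.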

What did the training and testing? Both were done entirely by an automated machine learning (AI) algorithm from BigML.com called DeepNet. A DeepNet* was trained on the first 80% of days and then evaluated on the last 20% of days. DeepNet is a hands-off technique offered to anyone for free.

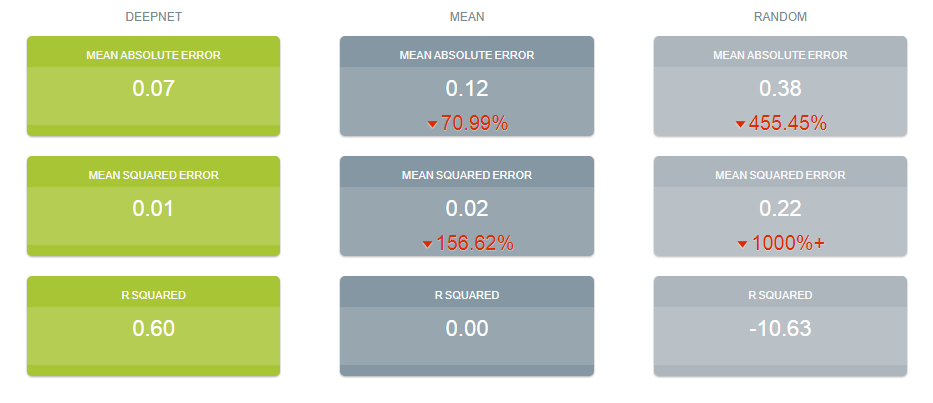

The chart below displays ridiculously good results, given in the usual AI industry way: the error rates for DeepNet’s predictions on the 20% test group (in green) are dramatically smaller than those of the standard baseline methods (in gray), which predict either the mean (average) rate of the training data or values drawn by random chance. Moreover, only the DeepNet trained on the astronomical data shows a strong R-squared, which suggests that its predicted misspelling rates correlate well with the actual values.

Let me summarize my take: using only basic astronomy data, future misspelling rates in Amazon reviews were predicted more accurately than by random values or by repeatedly applying the average (mean) value. Moreover, the model’s predicted values fit the actual values well, as shown by the R-squared.
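To make the comparison concrete, here is a small sketch of how an error rate and R-squared are typically computed for a model and for a mean baseline. The function names and all the numbers are made up for illustration; they are not the values from the evaluation, and BigML may compute its metrics with details that differ from this sketch.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute gap between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def r_squared(actual, predicted):
    """1 minus (residual sum of squares / total sum of squares)."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


# Made-up test-set values, purely illustrative.
toy_actual = [1.0, 2.0, 3.0, 4.0]
toy_model = [1.1, 1.9, 3.2, 3.8]        # hypothetical model predictions
toy_mean_baseline = [2.5] * 4           # same average value repeated every day

model_mae = mean_absolute_error(toy_actual, toy_model)
baseline_mae = mean_absolute_error(toy_actual, toy_mean_baseline)
model_r2 = r_squared(toy_actual, toy_model)
baseline_r2 = r_squared(toy_actual, toy_mean_baseline)
```

A baseline that always predicts the average explains none of the day-to-day variation, so its R-squared sits at or below zero; that is why a clearly positive R-squared together with a lower error is read as the model adding information.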

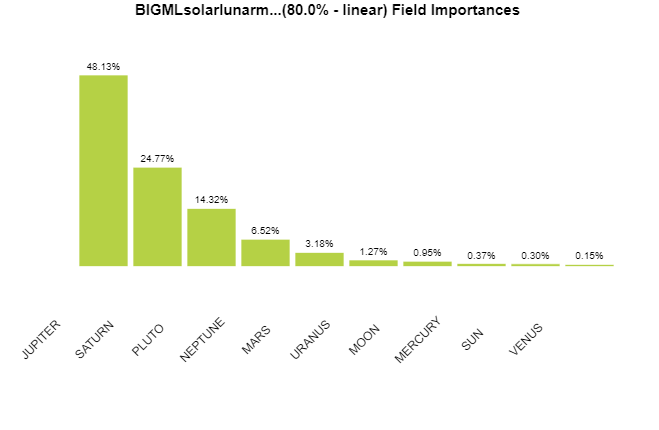

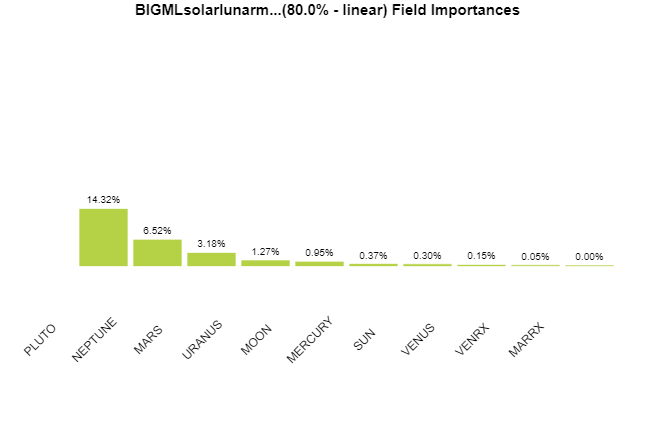

Here are the DeepNet fields in order of importance:

I am not even sure what to do next, but in case you do, here is the spreadsheet:

Download fullamazonmisspellingdata.csv (783 kb)

Please let me know what you find, and please reference this post if you use the dataset.

* For an instructable on exactly how I did this, see here. Note that a linear split was used.